Anthropic Launches AI Code Review Tool to Handle Surge of AI-Generated Code

Key Facts

- What: Anthropic released Code Review, a multi-agent AI tool that automatically analyzes pull requests generated by Claude Code, focusing on logic errors and bugs and ranking its findings by severity.

- When: Launched Monday in research preview for Claude for Teams and Claude for Enterprise customers.

- Availability: Integrates directly with GitHub; can be enabled by default for entire engineering teams.

- Pricing: Token-based; average cost per review estimated at $15–$25 depending on code complexity.

- Architecture: Uses multiple AI agents working in parallel, with a final aggregator that removes duplicates and ranks findings by severity (red, yellow, purple).

Anthropic has launched Code Review, an AI-powered tool designed to automatically review the growing volume of code produced by its Claude Code system and catch bugs before they reach production. The product addresses a key pain point for enterprise customers: the dramatic increase in pull requests created by AI coding tools, which has created review bottlenecks and introduced new risks around quality, security, and maintainability.

The tool launched Monday and is initially available in research preview to Claude for Teams and Claude for Enterprise users. It integrates with GitHub to scan pull requests, leave inline comments, and provide step-by-step explanations of identified issues. According to Cat Wu, Anthropic’s head of product, the feature was developed in direct response to feedback from enterprise leaders using Claude Code.

Addressing the 'Vibe Coding' Challenge

The rapid adoption of AI coding assistants has transformed software development. Developers now use natural language prompts to generate large volumes of code quickly — a practice sometimes called “vibe coding.” While this accelerates feature development, it has also led to more bugs, security vulnerabilities, and code that developers do not fully understand.

Wu told TechCrunch that Claude Code has significantly increased code output, resulting in a surge of pull requests that overwhelm traditional human review processes. “We’ve seen a lot of growth in Claude Code, especially within the enterprise, and one of the questions that we keep getting from enterprise leaders is: Now that Claude Code is putting up a bunch of pull requests, how do I make sure that those get reviewed in an efficient manner?” she said.

Code Review is Anthropic’s direct answer to that bottleneck. The tool targets large enterprise users such as Uber, Salesforce, and Accenture, which already use Claude Code. Engineering leads can enable it by default across their teams so that every pull request receives automated analysis.

Multi-Agent Architecture and Review Process

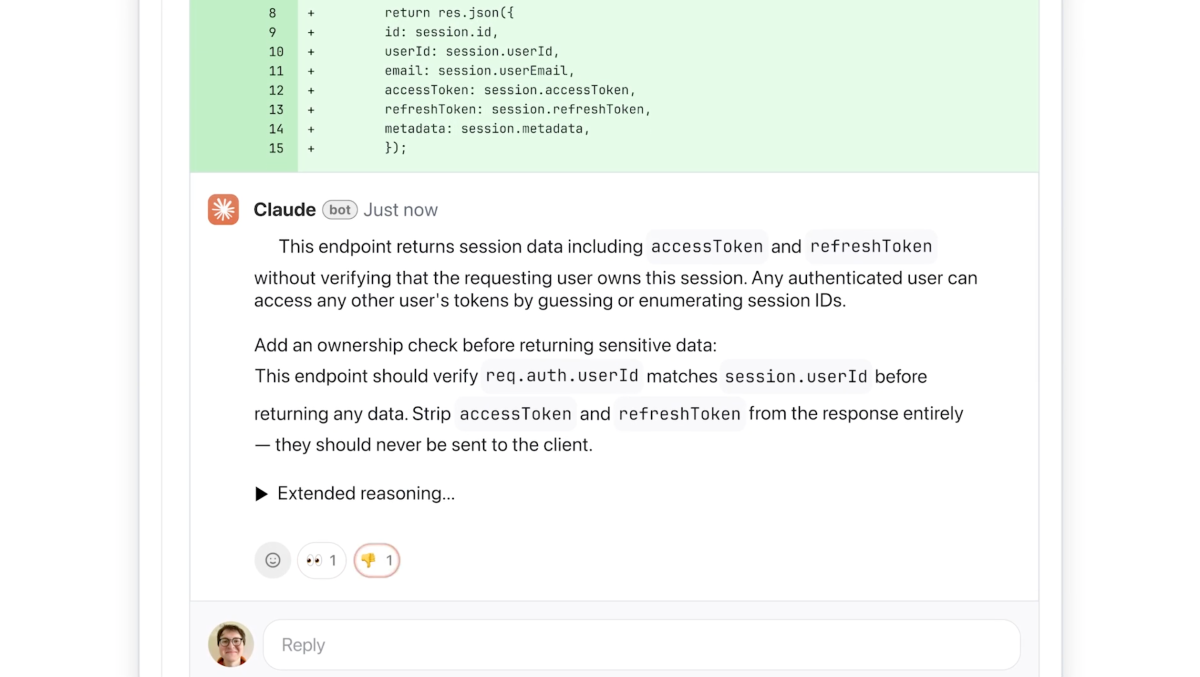

Unlike simpler automated code review tools that often focus on style or surface-level issues, Anthropic’s Code Review prioritizes logical errors and high-impact bugs. Wu emphasized that previous AI review systems frequently annoyed developers with non-actionable or false-positive feedback. “We decided we’re going to focus purely on logic errors. This way we’re catching the highest priority things to fix,” she said.

The system employs a multi-agent architecture. When a pull request is opened, multiple AI agents analyze the code in parallel from different perspectives. These agents identify potential issues, cross-verify findings to reduce false positives, and then feed results to a final aggregator agent. This aggregator removes duplicate reports, ranks issues by importance, and generates a single coherent overview comment along with inline annotations.
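The fan-out and aggregation flow described above can be sketched in a few lines of Python. This is a minimal illustration, not Anthropic's implementation: the three reviewer functions are hypothetical stubs standing in for model-backed agents, and the voting rule used for cross-verification (keep a finding only if at least two agents report it) is an assumption, since the article does not say how findings are verified.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    summary: str


# Hypothetical reviewer agents; a real agent would call a model on the diff.
def check_null_handling(diff: str) -> list[Finding]:
    return [Finding("app.py", 12, "possible None dereference")]


def check_boundaries(diff: str) -> list[Finding]:
    return [
        Finding("app.py", 12, "possible None dereference"),
        Finding("app.py", 30, "off-by-one in loop bound"),
    ]


def check_state(diff: str) -> list[Finding]:
    return [
        Finding("app.py", 30, "off-by-one in loop bound"),
        Finding("util.py", 5, "unused variable"),
    ]


AGENTS = [check_null_handling, check_boundaries, check_state]


def review(diff: str, min_votes: int = 2) -> list[Finding]:
    # Fan out: every agent analyzes the same diff in parallel.
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        per_agent = list(pool.map(lambda agent: agent(diff), AGENTS))

    # Aggregate: count each finding at most once per agent. Using the
    # finding itself as the key also collapses duplicate reports.
    votes = Counter(f for findings in per_agent for f in set(findings))

    # Assumed cross-verification rule: keep findings with enough votes.
    confirmed = [f for f, n in votes.items() if n >= min_votes]
    return sorted(confirmed, key=lambda f: (f.path, f.line))
```

With these stubs, `review("...")` returns the two findings that two agents agree on and drops the one reported by a single agent.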

Reviews include step-by-step reasoning: the tool explains what the issue is, why it could be problematic, and how it might be fixed. Issues are color-coded by severity:

- Red for highest severity problems that should be addressed immediately

- Yellow for potential issues worth reviewing

- Purple for problems related to pre-existing code or historical bugs
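As a rough sketch of how such a report might be modeled, the Python below represents the three severity colors and the what/why/fix explanation, then renders issues in severity order. The class and field names are hypothetical, and placing purple after yellow in the sort order is an assumption; the article lists the colors but does not say how purple ranks.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    RED = 0      # highest severity, should be addressed immediately
    YELLOW = 1   # potential issue worth reviewing
    PURPLE = 2   # related to pre-existing code or historical bugs


@dataclass
class Issue:
    severity: Severity
    what: str  # what the issue is
    why: str   # why it could be problematic
    fix: str   # how it might be fixed


def render_overview(issues: list[Issue]) -> str:
    lines = []
    # sorted() is stable, so issues of equal severity keep their input order.
    for issue in sorted(issues, key=lambda i: i.severity):
        lines.append(f"[{issue.severity.name}] {issue.what}")
        lines.append(f"  Why it matters: {issue.why}")
        lines.append(f"  Suggested fix: {issue.fix}")
    return "\n".join(lines)
```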

The tool also provides a lightweight security analysis. For deeper security scanning, Anthropic points customers to its recently launched Claude Code Security product, which offers more comprehensive vulnerability detection and patch suggestions.

Pricing and Enterprise Focus

Because the multi-agent system is computationally intensive, pricing follows a token-based model common to frontier AI services. Wu estimated that a typical review would cost between $15 and $25, though the exact price varies with code complexity and size. The company positions Code Review as a premium experience necessary to manage the scale of AI-generated code.
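To put the per-review estimate in budget terms, a back-of-the-envelope calculation helps. The sketch below uses only Wu's $15 to $25 range; the figure of 200 pull requests a month is a hypothetical team size chosen for illustration, and actual token-based charges would vary with each review.

```python
LOW_PER_REVIEW = 15   # USD, lower end of Wu's estimate
HIGH_PER_REVIEW = 25  # USD, upper end of Wu's estimate


def monthly_review_cost(pull_requests_per_month: int) -> tuple[int, int]:
    """Return the (low, high) monthly cost range in USD."""
    return (
        pull_requests_per_month * LOW_PER_REVIEW,
        pull_requests_per_month * HIGH_PER_REVIEW,
    )


low, high = monthly_review_cost(200)  # 200 PRs/month is a hypothetical volume
print(f"${low:,} to ${high:,} per month")  # prints "$3,000 to $5,000 per month"
```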

This launch comes at a critical time for Anthropic. On the same day as the Code Review announcement, the company filed two lawsuits against the Department of Defense after the agency designated it a supply chain risk. The legal dispute is expected to push Anthropic to lean more heavily on its commercial enterprise business, which has grown rapidly. Claude Code’s run-rate revenue has surpassed $2.5 billion since launch, and enterprise subscriptions have quadrupled since the beginning of the year.

Internal Adoption and Developer Experience

Anthropic has been using a similar review system internally. Wu noted that the company’s own developers have come to expect Code Review comments on their pull requests and “get a little nervous” when they don’t appear. This internal validation helped shape the product’s focus on high-signal, actionable feedback rather than nitpicky style suggestions.

The emphasis on logical correctness over style was informed by developer feedback across the industry. “We found that in AI-generated reviews, people really just want the logic errors to start with,” Wu explained. By keeping the false positive rate low, the tool aims to maintain developer trust and encourage adoption.

Competitive Context in AI-Assisted Development

Anthropic’s move reflects a broader industry trend: as generative AI tools flood codebases with machine-generated code, new layers of AI review and validation are becoming essential. Traditional static analysis and human code review processes were not designed for the volume and characteristics of AI-generated pull requests.

The tool’s GitHub integration allows it to fit naturally into existing developer workflows. Comments appear directly in the pull request interface, making the AI reviewer feel like an additional team member rather than an external system.

Other companies have released AI coding assistants and security-focused code scanners. Anthropic’s multi-agent approach to logic-focused review is a notable step toward specialized AI tools that manage the downstream effects of widespread AI code generation.

Impact on Development Teams

For engineering organizations, Code Review offers a way to maintain code quality without proportionally increasing headcount for review tasks. Automating the first pass of analysis lets human reviewers focus on higher-level architecture, business logic, and strategic decisions rather than hunting for basic logical errors.

The ability to customize additional checks based on internal best practices gives teams flexibility to enforce company-specific standards. The color-coded severity system helps prioritize work, while step-by-step explanations help less experienced developers learn from the AI’s reasoning.

Wu described the product as something driven by “an insane amount of market pull.” As more engineers use Claude Code to build features rapidly, the friction of reviewing that output has become a major bottleneck. Code Review aims to remove that friction and allow teams to ship faster with confidence.

What's Next

The tool is currently in research preview, limited to Claude for Teams and Claude for Enterprise customers. Anthropic has not yet announced a timeline for broader availability or general release. Possible future enhancements include integration with additional version control platforms, deeper security analysis, and performance improvements that reduce the per-review cost.

As AI coding tools continue to evolve and generate ever-larger volumes of code, products like Code Review are likely to become standard components of the modern development stack. The launch signals Anthropic’s commitment to building not just code generation capabilities but also the supporting infrastructure needed to use AI-generated code responsibly at enterprise scale.

The company’s focus on logical correctness and low false-positive rates may set a benchmark for future AI review tools. If successful, Code Review could help address one of the most pressing challenges in AI-assisted software engineering: ensuring that speed does not come at the expense of quality and security.

Sources

- TechCrunch: Anthropic launches code review tool to check flood of AI-generated code

- The New Stack: Anthropic launches a multi-agent code review tool for Claude Code

- VentureBeat: Anthropic rolls out Code Review for Claude Code as it sues over Pentagon blacklist and partners with Microsoft

- Anthropic internal usage and Claude Code Security references via related coverage