The short version

Anthropic's Code Review is an AI-powered tool that automatically checks proposed code changes, many of them generated with Claude Code, for bugs, security risks, and logic errors before they get added to software projects. Launched in research preview for customers on Claude for Teams or Enterprise, it integrates with GitHub to scan pull requests (essentially code change proposals) and leaves comments with suggested fixes. It targets the "flood" of AI-generated code that speeds up development but creates review bottlenecks. Reviews cost about $15-25 each on average, and the preview is aimed at large customers such as Uber and Salesforce.

What happened

Imagine you're building a Lego castle with a team. Normally, one person hands over a big section, and everyone else checks it for wobbles or missing bricks before attaching it. But now, picture a super-fast robot (that's AI like Claude Code) cranking out huge chunks of the castle in minutes from your simple instructions—like "make a tower with a drawbridge." It's awesome for speed, but suddenly you've got a mountain of robot-made pieces piling up, and your team can't inspect them all fast enough. Bugs sneak in, the castle gets shaky, and shipping the final product slows to a crawl.

That's the problem Anthropic is solving with Code Review, launched Monday inside their Claude Code platform. Developers using "vibe coding"—just describing what they want in plain English—generate tons of code quickly. This has exploded productivity, with Claude Code's revenue hitting a $2.5 billion run-rate since launch and subscriptions quadrupling this year. But it creates a flood of "pull requests," which are like digital Post-it notes saying, "Hey team, review this code change before we add it." Enterprise leaders kept asking Anthropic's head of product, Cat Wu: "How do we review all these efficiently without delays?"

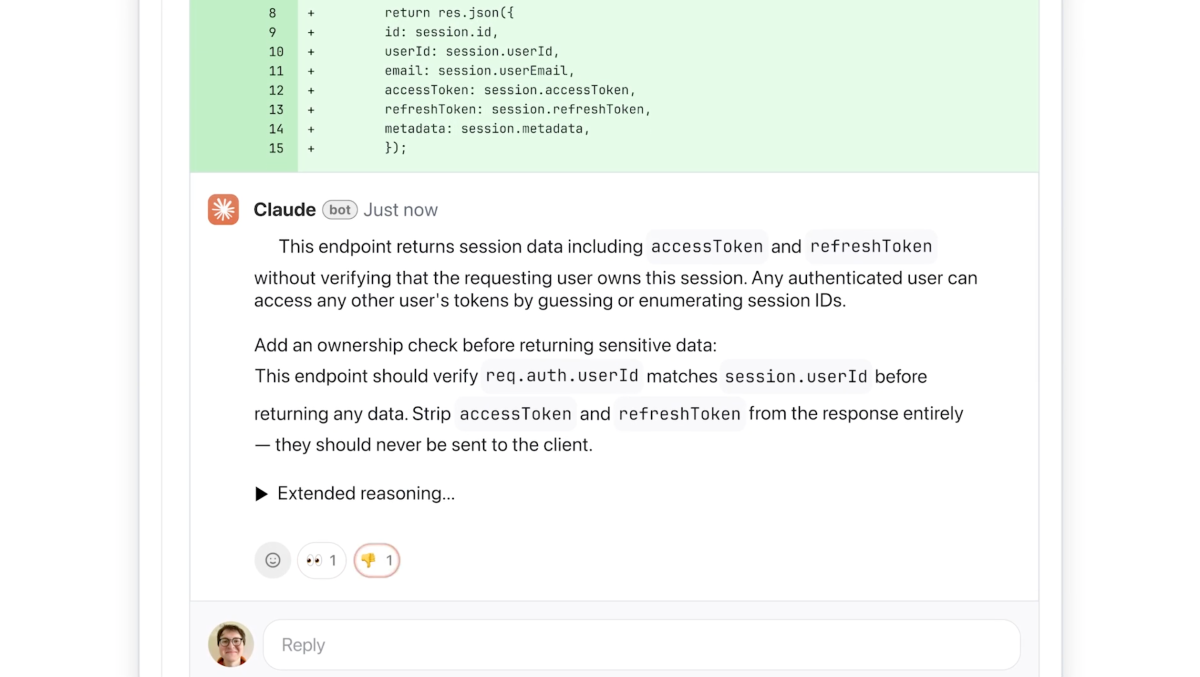

Enter Code Review: an AI "peer reviewer" that jumps in automatically. It hooks into GitHub (a popular site where teams store and collaborate on code) and scans every pull request. Multiple AI "agents"—think of them as specialized detectives—work in parallel, each checking from a different angle: one for logic bugs (does this actually work as intended?), another for security weak spots, and more. They cross-check each other to avoid false alarms, rank issues by severity (red for critical, yellow for watch-out, purple for ties to old problems), and post a tidy summary comment plus inline notes on the code itself. It explains step-by-step: "Here's the bug, why it's bad, and how to fix it." No nagging about style—just actionable logic fixes, which developers love because it's not annoying fluff.
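The flow described above (agents run in parallel, findings get cross-checked, and the survivors are ranked by severity) can be pictured with a toy sketch. This is not Anthropic's implementation: the two "agents" are simple string checks, and names such as `Finding`, `logic_agent`, `security_agent`, `verify`, and `review` are hypothetical stand-ins chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

# Colors follow the article's scheme; the numeric ordering is an assumption.
SEVERITY_ORDER = {"red": 0, "yellow": 1, "purple": 2}


@dataclass(frozen=True)
class Finding:
    line: int       # 1-based line number in the proposed change
    severity: str   # "red", "yellow", or "purple"
    message: str


def logic_agent(diff):
    """Toy stand-in for a logic reviewer: flags division by a literal zero."""
    return [Finding(n, "red", "division by zero")
            for n, text in enumerate(diff, start=1) if "/ 0" in text]


def security_agent(diff):
    """Toy stand-in for a security reviewer: flags hard-coded passwords."""
    return [Finding(n, "yellow", "hard-coded credential")
            for n, text in enumerate(diff, start=1) if "password =" in text]


def verify(finding, diff):
    """Toy cross-check: discard findings that point at a comment line."""
    return not diff[finding.line - 1].lstrip().startswith("#")


def review(diff, agents=(logic_agent, security_agent)):
    # Run every agent over the same change in parallel.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda agent: agent(diff), agents))
    # Keep only findings that survive the cross-check, then rank them.
    confirmed = [f for batch in batches for f in batch if verify(f, diff)]
    return sorted(confirmed, key=lambda f: (SEVERITY_ORDER[f.severity], f.line))


change = ["total = count / 0", "# password = 'hunter2'", "password = 'hunter2'"]
for finding in review(change):
    print(finding.severity, finding.line, finding.message)
```

In this sketch the commented-out password on the second line is raised by the security check but dropped by the cross-check, which is the "avoid false alarms" step in miniature.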

It's resource-heavy (uses lots of computing power), priced per "token" (basically, per chunk of code processed, like words in a document), averaging $15-25 per review depending on complexity. Right now, it's a research preview for paid Claude for Teams and Enterprise users—big outfits like Uber, Salesforce, and Accenture—who can flip it on for their whole team. Anthropic uses something similar internally; their devs even get nervous without its feedback. This launch hits amid company drama: Anthropic sued the U.S. Department of Defense over being labeled a "supply chain risk," pushing them harder into enterprise sales.
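To see how token-based pricing scales with the size of a change, here is a minimal arithmetic sketch. The per-token rate and the token counts are made-up placeholders, not Anthropic's published prices; the article only gives the $15-25 average, and `estimate_review_cost` is a hypothetical helper.

```python
def estimate_review_cost(tokens_processed, price_per_million_tokens):
    """Return the cost in dollars for a review that processes the given tokens."""
    return tokens_processed * price_per_million_tokens / 1_000_000


# Hypothetical rate, chosen only to make the arithmetic easy to follow.
ASSUMED_PRICE_PER_MILLION = 4.0

large_review = estimate_review_cost(5_000_000, ASSUMED_PRICE_PER_MILLION)
small_review = estimate_review_cost(500_000, ASSUMED_PRICE_PER_MILLION)

print(f"large change: ${large_review:.2f}")
print(f"small change: ${small_review:.2f}")
```

Under these assumed numbers, a review that processes ten times as many tokens costs ten times as much, which is why the price varies with the size and complexity of the pull request.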

Why should you care?

You might not code, but this matters because software runs everything you touch daily: your banking app, ride-sharing service, email, shopping sites, even the smart fridge or car software. AI tools like Claude Code are making development much faster, which could mean quicker updates, bug fixes, and new features in your favorite apps. But without checks like Code Review, that speed can let hidden bugs or security holes slip through, leading to crashes, data breaches, or downtime that disrupts your life (remember when apps glitch during peak hours?).

For everyday folks, cleaner AI-generated code means more reliable tech. Think fewer frustrating app crashes while ordering food, safer online banking without hack risks, or faster rollouts of cool features like better photo editing in your phone's camera app. Big companies adopting this now (with Claude Code's massive growth) signal a shift: AI won't just write code—it'll self-police it. That could lower software costs long-term (fewer human hours wasted on fixes), making services cheaper or more innovative for you. If your job touches tech at all—even indirectly—this reduces "tech debt" that slows company progress.

What changes for you

Practically, nothing flips overnight since this is enterprise-focused (not for solo hobbyists yet). But ripples hit you soon:

- Apps get tougher and faster: Companies like Uber (your rides) and Salesforce (which powers customer service for many brands) are among the enterprise customers this preview is aimed at. Expect quicker updates with fewer of the bugs that used to delay launches. An order confirmation in a delivery app, for example, might go through cleanly because an AI reviewer caught a logic error early.

- Security you can trust: Code Review flags security issues only lightly (deeper scans come from Anthropic's new Claude Code Security tool) and color-codes them so they get fixed quickly. Fewer flaws reaching production should mean fewer openings for breaches and less worry about personal data leaks.

- Cost savings trickle down: At $15-25 per review, it's a "premium" tool, but for enterprises drowning in pull requests, it cuts bottlenecks. That efficiency could show up for you as stable pricing or free upgrades rather than price hikes to cover the cost of fixes.

- Broader AI reliability: Anthropic's multi-agent setup (parallel agents whose findings are combined) could become a template. Other AI code tools might copy it, making all kinds of software, from Netflix recommendations to fitness trackers, more robust. If you're a small business owner or freelancer using no-code tools, this may pave the way for pro-level quality without hiring coders.

No direct access for individuals yet—it's Teams/Enterprise only in preview. Watch for wider rollout as demand surges (Wu calls it "insane market pull"). Pricing is token-based, scaling with code size, so simple reviews stay cheap.

Frequently Asked Questions

### What exactly does Code Review check, and how accurate is it?

It focuses on logic errors (bugs where code doesn't work right), not picky style issues, plus light security scans. Multiple AI agents review in parallel, cross-verify to cut false positives, and rank by severity with colors (red=urgent, yellow=check it, purple=old issues). Developers say it's highly actionable—Anthropic's own team relies on it, getting "nervous" without feedback—and keeps false alarms low by sticking to real bugs you should fix.

### How much does it cost, and who's it for?

Pricing is token-based (like charging per word processed), averaging $15-25 per pull request review depending on code complexity. That is premium pricing, but it can pay off at high volume. It's available to Claude for Teams and Enterprise customers in research preview and is aimed at big users such as Uber, Salesforce, and Accenture that generate large amounts of AI-written code. It is not free and not open to individuals yet; engineering leads enable it team-wide.

### How is this different from other AI code tools?

Unlike basic AI feedback that annoys devs with non-actionable nags, Code Review runs multiple agents in parallel for focused logic and security checks, with step-by-step explanations and suggested fixes. It integrates with GitHub to comment automatically on pull requests, and the cost of each review scales with the size of the change. It pairs with Anthropic's new Claude Code Security tool for deeper vulnerability scans. Anthropic is pitching it specifically at the enterprise review bottleneck rather than as a general-purpose coding assistant.

### When can regular people or small teams use it?

Right now, research preview for paid enterprise/teams users only—no timeline for public or free access mentioned. But with "insane market pull" from Claude Code's growth ($2.5B run-rate), wider rollout seems likely soon, especially as Anthropic leans into booming business subs (quadrupled this year).

### Does this make AI-generated code safe enough for critical stuff like banks or hospitals?

It catches high-priority logic bugs and basic security risks before code merges, with customizable checks for company rules. It is not foolproof, and human oversight is still key, but it should reduce the risks that come with AI's "flood" of code. Anthropic uses a similar system internally; for critical apps, Code Review complements deeper tools like Claude Code Security.

The bottom line

Anthropic's Code Review is an attempt to tame AI's code explosion so that faster software development benefits you without the chaos. By automating reviews at $15-25 a pop for enterprises, it aims to keep apps stable, secure, and speedy, which should mean fewer glitches in your daily digital life, from smoother ride-hailing to safer online banking. As big companies adopt it amid Anthropic's enterprise boom, expect ripple effects: features roll out faster, costs stabilize, and AI feels less like a wild robot and more like a trusted teammate. If you're not in tech, this is the kind of invisible progress that makes your world run more smoothly. Keep an eye on wider rollouts.

Sources

- TechCrunch: Anthropic launches code review tool to check flood of AI-generated code

- The New Stack: Anthropic launches a multi-agent code review tool for Claude Code

- VentureBeat: Anthropic rolls out Code Review for Claude Code

- CyberPress: Anthropic Launches Claude Code Security

- The Hacker News: Anthropic Launches Claude Code Security for AI-Powered Vulnerability Scanning
