Ulysses Sequence Parallelism Enables Training with Million-Token Contexts

Key Facts

- What: Hugging Face and Microsoft’s DeepSpeed team released Ulysses Sequence Parallelism (Ulysses SP), a technique for efficient full-attention transformer training on sequences of up to 1 million tokens.

- Who: Developed by the DeepSpeed team at Microsoft in collaboration with Hugging Face, building on the open-source DeepSpeed library.

- Performance: Supports sequences 4x longer than existing systems while maintaining a high fraction of peak compute performance.

- Scalability: Removes single-GPU memory limitations for both model size and sequence length, enabling near-indefinite scaling across thousands of GPUs.

- Availability: Implementation and tutorial released on Hugging Face and DeepSpeed repositories for integration with transformer models.

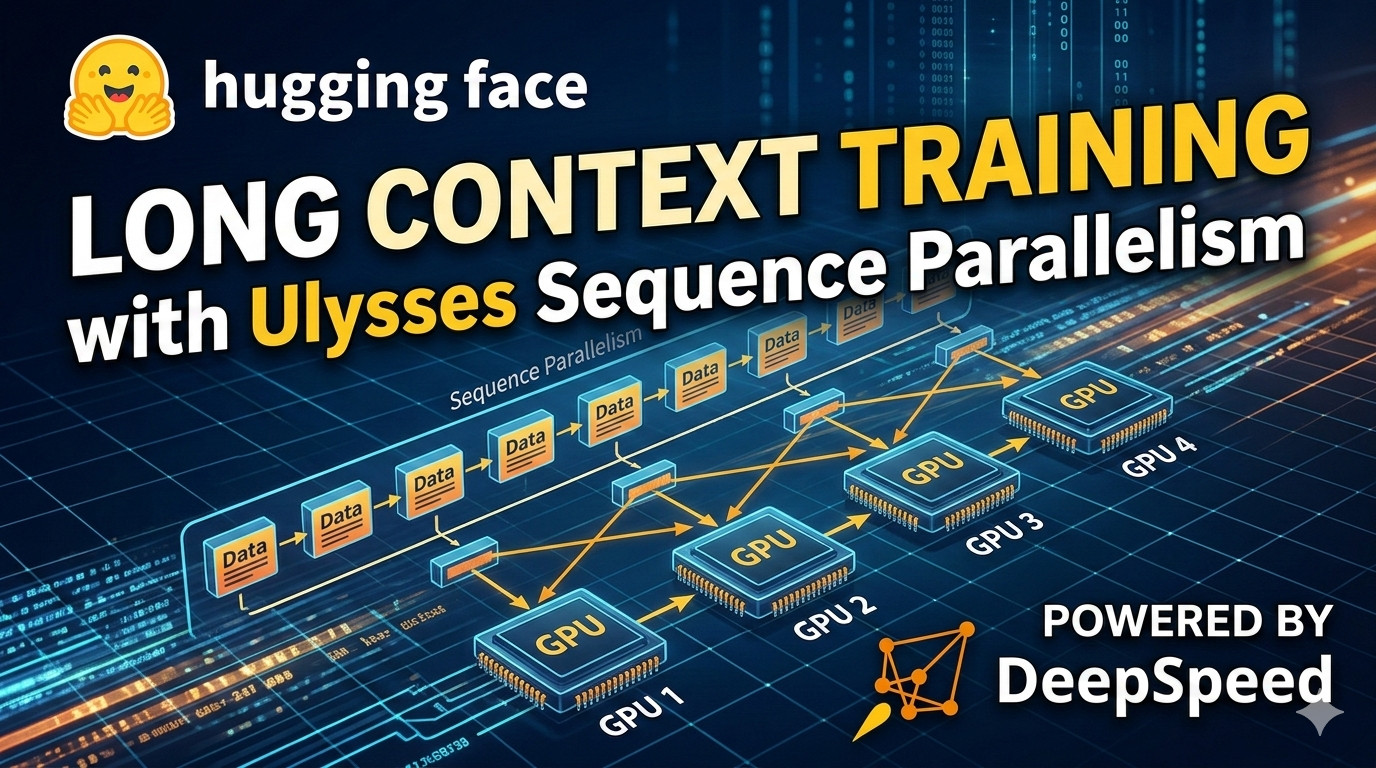

Hugging Face and Microsoft’s DeepSpeed team have introduced Ulysses Sequence Parallelism, a major advance in scaling transformer training to ultra-long contexts of up to one million tokens. The new technique addresses a critical bottleneck in large language model development by enabling efficient full self-attention on sequences far beyond the reach of standard parallelism methods. According to the announcement, Ulysses SP represents the current apex of efficient, scalable full-attention training on ultra-long sequences and generalizes across transformer architectures and scientific domains.

Traditional approaches to handling long sequences in transformers have relied on data parallelism, tensor parallelism for hidden dimensions, and pipeline parallelism for model depth. However, these methods leave sequence length as a primary constraint, typically limiting practical training to a few thousand tokens per GPU due to the quadratic memory and compute requirements of self-attention. Ulysses SP specifically targets this sequence-length dimension, allowing both model size and sequence length to scale without being bounded by single-GPU memory limits.
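To make the quadratic growth concrete, the short calculation below estimates the memory needed to hold one attention head's score matrix for a single layer. It is a back-of-the-envelope sketch, not a measurement: it assumes the full matrix is materialized in 16-bit precision, and the function name `attention_score_bytes` is illustrative. Memory-efficient attention kernels avoid storing the full matrix, but the compute cost remains quadratic in sequence length.

```python
def attention_score_bytes(seq_len: int, num_heads: int = 1, bytes_per_element: int = 2) -> int:
    """Bytes needed to store a seq_len x seq_len score matrix for each head.

    Assumes naive materialization in 16-bit precision (2 bytes per element).
    """
    return num_heads * seq_len * seq_len * bytes_per_element


for n in (8_192, 131_072, 1_048_576):
    gib = attention_score_bytes(n) / 2**30
    print(f"{n:>9} tokens -> {gib:,.3f} GiB per head, per layer")
```

Under these assumptions, going from 8,192 tokens to roughly one million multiplies the per-head score matrix by a factor of 16,384, which is why the sequence dimension has to be split across devices.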

The technique builds on earlier work in sequence parallelism but introduces optimizations that improve efficiency and maximum context size. DeepSpeed-Ulysses can now handle sequences of over one million tokens, which the announcement describes as four times longer than existing systems, while delivering strong hardware utilization. This capability opens new possibilities for training models on extremely long documents, code repositories, scientific datasets, and other data types that naturally contain hundreds of thousands or millions of tokens.

Technical Details and Implementation

Ulysses Sequence Parallelism works by partitioning the sequence dimension across multiple GPUs, enabling the attention computation to be distributed while minimizing communication overhead. The approach maintains the mathematical correctness of full attention rather than relying on sparse or approximate attention mechanisms that some other long-context solutions use.

According to technical documentation from the DeepSpeed team, the system achieves these gains through all-to-all collective communication around the attention block, memory-efficient attention kernel implementations, and integration with DeepSpeed’s existing ZeRO optimizer family. Each GPU starts with a slice of the sequence; an all-to-all exchange before attention gives every GPU the full sequence for a subset of attention heads, and a second exchange afterward restores the sequence-sharded layout. The result is a solution that not only supports much longer sequences but does so at a high fraction of theoretical peak FLOPS on modern GPU clusters.
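The communication pattern can be illustrated with a single-process simulation. DeepSpeed-Ulysses is generally described as using an all-to-all collective: tokens start out sharded by sequence position, are re-sharded by attention head so that each rank sees the full sequence for its heads, and are re-sharded back after attention. The sketch below mimics that with plain Python lists in place of GPUs. It is not the DeepSpeed implementation; all function names are hypothetical, and it assumes both the sequence length and the head count are divisible by the number of ranks `P`.

```python
import math
import random


def attention_one_head(q, k, v):
    """Full softmax attention for one head; q, k, v are lists of d-dim vectors."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        m = max(scores)  # subtract the max for numerical stability
        w = [math.exp(s - m) for s in scores]
        z = sum(w)
        out.append([sum(wj * vj[c] for wj, vj in zip(w, v)) / z for c in range(d)])
    return out


def split_sequence(x, P):
    """Shard a [token][head][dim] tensor into P contiguous token slices."""
    n_local = len(x) // P
    return [x[r * n_local:(r + 1) * n_local] for r in range(P)]


def seq_to_head_all_to_all(shards, P):
    """Simulated all-to-all: rank j ends up with ALL tokens for ITS heads only."""
    hp = len(shards[0][0]) // P  # heads per rank
    return [[tok[j * hp:(j + 1) * hp] for r in range(P) for tok in shards[r]]
            for j in range(P)]


def head_to_seq_all_to_all(shards, P):
    """Inverse all-to-all: rank r ends up with ITS tokens for ALL heads."""
    n_local = len(shards[0]) // P
    return [[[vec for j in range(P) for vec in shards[j][t]]
             for t in range(r * n_local, (r + 1) * n_local)]
            for r in range(P)]


def ulysses_attention(q, k, v, P):
    """Sequence-sharded in, sequence-sharded out, full attention in between."""
    N = len(q)
    qh, kh, vh = (seq_to_head_all_to_all(split_sequence(x, P), P) for x in (q, k, v))
    local_out = []
    for j in range(P):  # each "rank" attends over the full sequence for its heads
        heads = len(qh[j][0])
        per_head = [attention_one_head([t[h] for t in qh[j]],
                                       [t[h] for t in kh[j]],
                                       [t[h] for t in vh[j]]) for h in range(heads)]
        local_out.append([[per_head[h][t] for h in range(heads)] for t in range(N)])
    out_shards = head_to_seq_all_to_all(local_out, P)
    return [tok for shard in out_shards for tok in shard]


def reference_attention(q, k, v):
    """Unsharded multi-head attention used as the ground truth."""
    N, H = len(q), len(q[0])
    per_head = [attention_one_head([t[h] for t in q], [t[h] for t in k],
                                   [t[h] for t in v]) for h in range(H)]
    return [[per_head[h][t] for h in range(H)] for t in range(N)]


def random_tensor(N, H, D):
    return [[[random.gauss(0, 1) for _ in range(D)] for _ in range(H)] for _ in range(N)]


random.seed(0)
N, H, D, P = 8, 4, 3, 2  # tokens, heads, head dim, simulated ranks
q, k, v = random_tensor(N, H, D), random_tensor(N, H, D), random_tensor(N, H, D)
out = ulysses_attention(q, k, v, P)
ref = reference_attention(q, k, v)
max_diff = max(abs(a - b) for tu, tr in zip(out, ref)
               for hu, hr in zip(tu, tr) for a, b in zip(hu, hr))
print(f"max abs difference vs. unsharded attention: {max_diff:.2e}")
```

Because each simulated rank runs ordinary attention over the complete sequence for its own heads, the sharded result matches unsharded attention rather than approximating it. The divisibility requirement on the head count also shows why the degree of sequence parallelism in this scheme is bounded by the number of attention heads.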

The implementation released alongside the Hugging Face blog post includes integration with popular transformer libraries and provides examples for both pretraining and continued pretraining scenarios. Developers can now experiment with sequence lengths ranging from hundreds of thousands to over a million tokens using standard hardware configurations.

A key use case highlighted in the technical materials involves gradual context length expansion during training. For example, a large-scale training run on 1024 GPUs might begin with a sequence length of 8,192 tokens and a micro-batch size of 1, then progressively increase context length as training advances. This approach, supported by research such as Xiong et al. (2023), allows models to first learn from shorter contexts before expanding to longer ones without requiring massive memory footprints from the start.
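A schedule like this can be sketched in a few lines. The example below is illustrative only: the source says the run starts at 8,192 tokens on 1024 GPUs and grows progressively, but the doubling factor, the one-million-token target, and the per-GPU budget of 8,192 tokens are assumptions made here, and the function names are hypothetical. For each stage it computes the smallest sequence-parallel group that keeps every GPU's shard within the budget, and how many data-parallel replicas that leaves.

```python
def context_schedule(start_len: int, max_len: int, factor: int = 2) -> list[int]:
    """Sequence lengths for each training stage, growing by `factor` up to max_len."""
    lengths = []
    n = start_len
    while n < max_len:
        lengths.append(n)
        n *= factor
    lengths.append(max_len)
    return lengths


def sp_degree_for(seq_len: int, tokens_per_gpu_budget: int) -> int:
    """Smallest sequence-parallel degree keeping each shard within the budget."""
    return max(1, -(-seq_len // tokens_per_gpu_budget))  # ceiling division


TOTAL_GPUS = 1024          # cluster size from the example in the text
TOKENS_PER_GPU = 8_192     # assumed per-GPU token budget

schedule = context_schedule(8_192, 1_048_576)
for stage, seq_len in enumerate(schedule):
    sp = sp_degree_for(seq_len, TOKENS_PER_GPU)
    replicas = TOTAL_GPUS // sp
    print(f"stage {stage}: seq_len={seq_len:>9}  sp_degree={sp:>3}  dp_replicas={replicas}")
```

With these assumed numbers, the final stage spreads each one-million-token sequence over 128 GPUs, leaving 8 data-parallel replicas on the 1024-GPU cluster. A real run would also need the sequence-parallel degree to be compatible with the model's attention head count.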

Competitive Context and Industry Significance

The release comes at a time when the AI industry is intensely focused on extending context windows in large language models. Commercial offerings from OpenAI, Anthropic, Google and others have steadily increased context lengths, with some models now supporting hundreds of thousands of tokens in inference. However, training models from scratch or performing continued pretraining at these scales has remained extremely challenging and expensive.

Ulysses SP addresses the training side of this equation, potentially accelerating research into models that can reason over book-length content, entire codebases, or long scientific papers in a single forward pass. Because the technique maintains full attention rather than relying on approximations, researchers can explore long-context capabilities without the accuracy trade-offs that often accompany sparse attention methods.

The collaboration between Hugging Face and Microsoft’s DeepSpeed team underscores the growing importance of open-source infrastructure in pushing the frontier of AI capabilities. DeepSpeed has become a foundational library for efficient large-scale training, used by numerous organizations to train some of the world’s largest models. By making Ulysses SP available through both the DeepSpeed repository and Hugging Face’s ecosystem, the teams have ensured broad accessibility for researchers and developers.

Impact on Developers and Researchers

For developers and researchers, the practical implications are significant. Training with DeepSpeed-Ulysses removes the previous hard limits imposed by single-GPU memory, allowing teams to work with much longer sequences without requiring exotic hardware configurations or complex model sharding strategies beyond what DeepSpeed already provides.

The system’s efficiency at scale means that organizations with access to large GPU clusters can now realistically consider pretraining or fine-tuning models on datasets that were previously intractable. This could be particularly valuable in domains such as legal document analysis, scientific literature processing, software engineering, and genomics, where the natural unit of analysis often spans tens or hundreds of thousands of tokens.

Early benchmarks shared in the announcement materials indicate that Ulysses SP supports sequences 4x longer than existing systems while maintaining competitive training throughput. For teams working on long-context models, that efficiency can translate into shorter training runs and lower cloud computing costs.

The generalization properties of the technique are also noteworthy. Rather than being limited to specific model architectures, Ulysses SP works across a wide array of transformer designs, making it applicable to both language models and specialized models in other scientific domains.

What's Next

The release of Ulysses Sequence Parallelism is likely to accelerate research into even longer context lengths and more efficient training methods. The DeepSpeed team has indicated that the current implementation represents a significant milestone but that further optimizations and extensions are already in development.

Looking ahead, the combination of training-time sequence parallelism with emerging inference-time techniques for handling million-token contexts could enable a new generation of AI systems capable of processing and reasoning over truly massive inputs. This has profound implications for applications ranging from comprehensive code understanding to multi-document scientific reasoning.

The open-source nature of the release means the broader AI research community can build upon these advances, potentially leading to rapid iteration and improvement of long-context training techniques. Integration with popular frameworks and libraries is expected to expand in the coming months as the ecosystem adopts the new capabilities.

As models continue to grow in both parameter count and context length, technologies like Ulysses SP will be essential for keeping training computationally feasible. The announcement represents an important step toward making million-token context training a practical reality rather than a theoretical aspiration.