Ulysses Sequence Parallelism: Train Million-Token Models Without Running Out of GPU Memory

Why this matters for builders

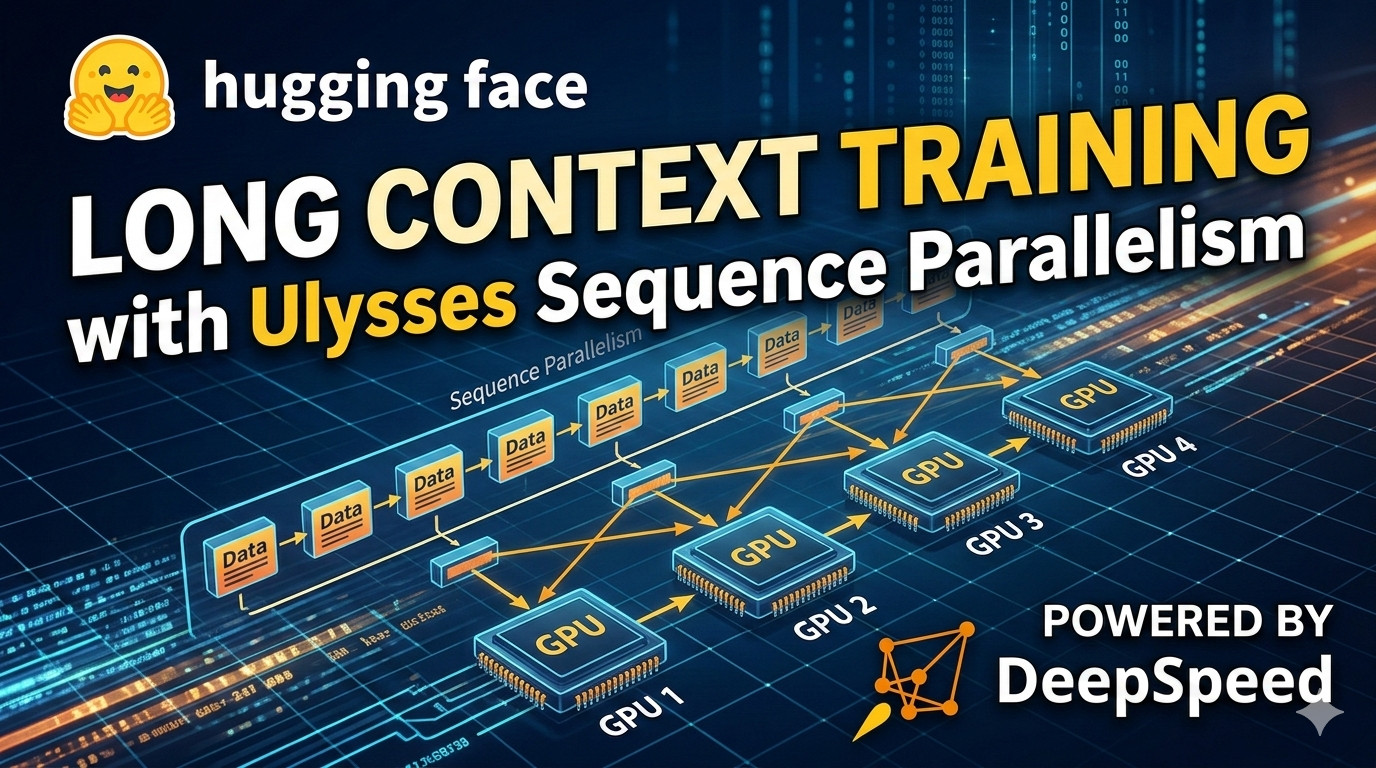

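DeepSpeed-Ulysses (Ulysses Sequence Parallelism) lets you train full-attention transformer models on sequences of 1 million tokens or more. It partitions each input sequence across GPUs along the sequence dimension. Around each attention block, all-to-all collectives regroup the data so that every GPU computes exact attention over the full sequence for a subset of the attention heads. Because each GPU only exchanges its own fraction of the sequence, the per-GPU communication volume stays constant when sequence length and GPU count grow together.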

DeepSpeed-Ulysses comes from Microsoft's DeepSpeed team, and Hugging Face has covered it in a post on million-token training (see Sources). In the DeepSpeed-Ulysses paper it supports sequences 4× longer than existing systems while sustaining over 54% of hardware peak throughput. That makes it practical to fine-tune or continue pre-training models on full legal contracts, scientific papers, long video transcripts, or genomic sequences that would otherwise not fit in GPU memory.
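To make the sequence-splitting mechanism concrete, the sketch below simulates the two Ulysses all-to-all steps in a single NumPy process, with Python lists standing in for GPUs. It is not DeepSpeed code and performs no real communication. It assumes standard multi-head attention with a head count divisible by the degree, and it ignores causal masking and grouped-query attention. Each simulated rank starts with a contiguous chunk of the sequence; the first all-to-all gives it the full sequence for its own group of heads, and the second restores sequence sharding.

```python
import numpy as np


def shard_sequence(x, p):
    """Split an (N, H, d) array into p contiguous sequence chunks, one per rank."""
    return np.split(x, p, axis=0)


def seq_to_head_all_to_all(shards, p):
    """Before attention: rank r ends up with the FULL sequence for head group r."""
    hp = shards[0].shape[1] // p  # heads per rank
    return [
        np.concatenate([shards[s][:, r * hp:(r + 1) * hp] for s in range(p)], axis=0)
        for r in range(p)
    ]


def head_to_seq_all_to_all(head_shards, p):
    """After attention: rank r gets back sequence chunk r with all heads."""
    n_local = head_shards[0].shape[0] // p
    return [
        np.concatenate([head_shards[s][r * n_local:(r + 1) * n_local] for s in range(p)], axis=1)
        for r in range(p)
    ]


def attention(q, k, v):
    """Exact softmax attention, computed independently per head; inputs are (N, heads, d)."""
    scores = np.einsum("qhd,khd->hqk", q, k) / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return np.einsum("hqk,khd->qhd", weights, v)


rng = np.random.default_rng(0)
N, H, D, P = 16, 8, 4, 4  # tokens, heads, head dim, sequence-parallel degree
q, k, v = (rng.standard_normal((N, H, D)) for _ in range(3))
reference = attention(q, k, v)  # single-device result

q_h, k_h, v_h = (seq_to_head_all_to_all(shard_sequence(x, P), P) for x in (q, k, v))
local_out = [attention(q_h[r], k_h[r], v_h[r]) for r in range(P)]  # per-rank attention
out_shards = head_to_seq_all_to_all(local_out, P)  # back to sequence sharding
print(np.allclose(np.concatenate(out_shards, axis=0), reference))  # True
```

Because attention heads are independent, regrouping by heads loses nothing, which is why the result matches single-device attention exactly; the cost is the all-to-all traffic, which a real implementation pays over the GPU interconnect.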

When to use it

- You need full self-attention (not sparse or linear approximations) on sequences > 128k tokens

- You are running on 8+ GPUs and want the trainable sequence length to grow roughly linearly with the number of GPUs

- You are doing continual pre-training where context length grows over time

- You work with domain-specific long-context data (code repositories, arXiv papers, EHR records, etc.)

- Context-extension methods that rescale positional encodings (YaRN, NTK-aware scaling, RoPE scaling) no longer meet your accuracy targets on their own, and you need to actually train at the target length

The full process

1. Define the goal (1–2 hours)

Start by writing a one-page spec. Answer these questions:

- Exact maximum sequence length target (e.g. 512k or 1M)

- Model architecture and size (Llama-3-8B, Mistral-7B, custom encoder, etc.)

- Number of GPUs available and their memory (H100 80 GB vs A100 40 GB)

- Training objective: full pre-training, continual pre-training, or supervised fine-tuning

- Acceptable throughput target (tokens/sec/GPU)
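One way to keep the spec honest is to write it down as data and check, up front, the constraints that most often break Ulysses runs. The class below is a hypothetical helper; its name and fields are illustrative, not part of DeepSpeed or Transformers. The head-count checks reflect that the all-to-all hands each GPU a whole group of attention heads. The key/value check is an assumption, since some implementations replicate K/V heads instead of splitting them. The Llama-3-8B head counts come from its public model config, and the throughput target is a placeholder.

```python
from dataclasses import dataclass

VALID_OBJECTIVES = {"pretrain", "continual_pretrain", "sft"}


@dataclass(frozen=True)
class LongContextSpec:
    """Hypothetical one-page training spec; field names are illustrative."""

    max_seq_length: int
    num_gpus: int
    ulysses_degree: int
    num_attention_heads: int
    num_key_value_heads: int
    gpu_memory_gb: int
    objective: str
    target_tokens_per_sec_per_gpu: float

    def problems(self) -> list[str]:
        """Return consistency problems; an empty list means these checks pass."""
        issues = []
        if self.max_seq_length % self.ulysses_degree:
            issues.append("max_seq_length must be divisible by ulysses_degree")
        if self.num_gpus % self.ulysses_degree:
            issues.append("num_gpus must be a multiple of ulysses_degree")
        # The all-to-all gives each GPU a group of heads, so heads must split evenly.
        if self.num_attention_heads % self.ulysses_degree:
            issues.append("attention heads must be divisible by ulysses_degree")
        # Assumption: K/V heads are split the same way (some implementations replicate them).
        if self.num_key_value_heads % self.ulysses_degree:
            issues.append("key/value heads must be divisible by ulysses_degree")
        if self.objective not in VALID_OBJECTIVES:
            issues.append(f"unknown objective: {self.objective!r}")
        return issues


spec = LongContextSpec(
    max_seq_length=524_288,
    num_gpus=32,
    ulysses_degree=8,
    num_attention_heads=32,  # Llama-3-8B
    num_key_value_heads=8,  # Llama-3-8B uses grouped-query attention
    gpu_memory_gb=80,
    objective="continual_pretrain",
    target_tokens_per_sec_per_gpu=1_000.0,  # placeholder target, not a benchmark
)
print(spec.problems())  # []
```

Under the K/V assumption, raising the degree to 16 for this model would be flagged, because 8 key/value heads cannot be split 16 ways.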

Prompt for your coding assistant:

```text
You are an expert MLOps engineer. Help me scope a DeepSpeed-Ulysses training job.

Target: train a Llama-3-8B model on 512k token sequences using 32× H100 GPUs.

We want full attention, no approximations.

Output:
- Recommended DeepSpeed config (ZeRO stage, Ulysses parameters)
- Expected memory per GPU
- Rough tokens/sec estimate
- List of required code changes to a standard Hugging Face Trainer script
```
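Before trusting an assistant's memory figures, a back-of-the-envelope estimate helps. The function below is a rough, hypothetical sketch under stated assumptions: about 16 bytes per parameter for weights, gradients, and Adam state under mixed precision; ZeRO-3 sharding over all ranks; full activation checkpointing that keeps one bf16 hidden-state tensor per layer; and fp32 logits. It uses Llama-3-8B's public shape (32 layers, hidden size 4096, vocabulary 128,256). It ignores recomputation buffers, all-to-all buffers, and fragmentation, so treat the numbers as a lower bound and confirm them by measurement.

```python
GIB = 1024**3


def estimate_memory_gib(n_params, world_size, seq_len, sp_degree, hidden, n_layers, vocab):
    """Rough per-GPU memory components in GiB for one sequence (hypothetical helper)."""
    local_tokens = seq_len // sp_degree  # tokens each GPU holds outside attention
    return {
        # ~16 bytes/param (bf16 weights + grads, fp32 master weights + 2 Adam moments),
        # sharded by ZeRO-3 over all ranks; moved to CPU RAM if offloading is enabled.
        "model_states": 16 * n_params / world_size / GIB,
        # Full activation checkpointing: one bf16 hidden-state tensor per layer.
        "activation_checkpoints": n_layers * local_tokens * hidden * 2 / GIB,
        # fp32 logits over the full vocabulary for this GPU's tokens.
        "logits_fp32": local_tokens * vocab * 4 / GIB,
        # One head's N x N bf16 score matrix without a memory-efficient kernel:
        # after the all-to-all, each GPU attends over the full sequence.
        "naive_scores_one_head": seq_len * seq_len * 2 / GIB,
    }


est = estimate_memory_gib(
    n_params=8.03e9, world_size=32, seq_len=524_288, sp_degree=8,
    hidden=4096, n_layers=32, vocab=128_256,  # Llama-3-8B public config values
)
for name, gib in est.items():
    print(f"{name:>24}: {gib:8.2f} GiB")
```

In this estimate a single head's naive score matrix would need 512 GiB, which is why a memory-efficient kernel such as FlashAttention-2 is not optional at these lengths; the fp32 logits (about 31 GiB per GPU) are the largest remaining item.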

2. Scaffold the training codebase (2–4 hours)

Start from the reference material rather than from scratch: the DeepSpeed-Ulysses tutorial, the Ulysses material in the DeepSpeed repository, and the Hugging Face blog post (all listed under Sources).

Starter template prompt:

```text
Create a complete training script skeleton using DeepSpeed-Ulysses for sequence parallelism.

Requirements:
- Hugging Face Transformers + DeepSpeed integration
- Sequence length = 524288 (512k)
- Ulysses degree = 8 (sequence split across 8 GPUs)
- ZeRO stage 3 + CPU offload
- FlashAttention-2 where possible
- Logging with Weights & Biases
- Checkpointing that survives preemption
- Ability to resume from a previous 8k context checkpoint

Output the full directory structure and the three most important files with code.
```

Typical directory after scaffolding:

```text
ulysses-longtrain/
├── train.py
├── ds_config.json
├── model_utils.py
├── data/
│   └── long_context_collator.py
├── scripts/
│   └── launch.sh
└── wandb/
```

3. Implement carefully (4–8 hours)

Key code pieces you will write or adapt:

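ds_config.json (critical). Treat the sequence-parallel section as illustrative and check its key names against the DeepSpeed-Ulysses tutorial for your DeepSpeed version; the tutorial enables Ulysses in code, by wrapping the local attention computation in `deepspeed.sequence.layer.DistributedAttention`.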

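```json
{
  "train_micro_batch_size_per_gpu": "auto",
  "gradient_accumulation_steps": "auto",
  "gradient_clipping": 1.0,
  "steps_per_print": 10,
  "zero_optimization": {
    "stage": 3,
    "offload_optimizer": { "device": "cpu" },
    "offload_param": { "device": "cpu" }
  },
  "sequence_parallelism": {
    "enabled": true,
    "degree": 8,
    "sequence_parallel_type": "ulysses"
  },
  "bf16": { "enabled": "auto" }
}
```

`train_batch_size` is omitted because DeepSpeed derives it from the micro-batch size, the gradient accumulation steps, and the number of data-parallel replicas; a hard-coded value of 1 contradicts 4 accumulation steps and fails DeepSpeed's batch-size check. The `"auto"` values are filled in by the Hugging Face Trainer from its command-line arguments, which avoids config/argument mismatches. bf16 replaces fp16: the Llama 3 checkpoints are distributed in bf16, and bf16's wider exponent range reduces fp16's overflow risk.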

Launch script (scripts/launch.sh)

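```bash
# 32 GPUs = 4 nodes x 8 GPUs. --num_gpus is per node; "hostfile" lists the nodes,
# one per line, e.g. "node-1 slots=8".
deepspeed --hostfile hostfile --num_nodes 4 --num_gpus 8 train.py \
  --deepspeed ds_config.json \
  --model_name_or_path meta-llama/Meta-Llama-3-8B \
  --max_seq_length 524288 \
  --per_device_train_batch_size 1 \
  --gradient_accumulation_steps 4 \
  --bf16 \
  --learning_rate 2e-5 \
  --num_train_epochs 1
```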
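Batch-size settings interact with the Ulysses degree: all GPUs in one sequence-parallel group work on slices of the same sample, so only world_size / degree groups consume different data. The hypothetical helper below works through the numbers for a 32-GPU, degree-8 run with gradient accumulation 4. It assumes sequence-parallel groups are formed from consecutive ranks and that ranks are numbered node by node; that is a common convention, but confirm it in your framework.

```python
def parallel_layout(world_size, sp_degree, gpus_per_node, micro_batch, grad_accum, seq_len):
    """Hypothetical helper: split ranks into Ulysses sequence-parallel (SP) groups.

    Assumes consecutive ranks form an SP group, ranks are numbered node by node,
    and every rank in a group works on slices of the same sample.
    """
    if world_size % sp_degree:
        raise ValueError("world_size must be a multiple of the Ulysses degree")
    dp_size = world_size // sp_degree  # groups that consume different samples
    groups = [list(range(start, start + sp_degree)) for start in range(0, world_size, sp_degree)]
    # A group of consecutive ranks stays on one node if its first and last ranks share a node.
    intra_node = all(g[0] // gpus_per_node == g[-1] // gpus_per_node for g in groups)
    sequences_per_step = micro_batch * grad_accum * dp_size
    return {
        "dp_size": dp_size,
        "sp_groups": groups,
        "all_to_all_intra_node": intra_node,
        "tokens_per_gpu_per_micro_step": micro_batch * seq_len // sp_degree,
        "sequences_per_optimizer_step": sequences_per_step,
        "tokens_per_optimizer_step": sequences_per_step * seq_len,
    }


layout = parallel_layout(world_size=32, sp_degree=8, gpus_per_node=8,
                         micro_batch=1, grad_accum=4, seq_len=524_288)
print(layout["dp_size"], layout["all_to_all_intra_node"],
      layout["sequences_per_optimizer_step"], layout["tokens_per_optimizer_step"])
# 4 True 16 8388608
```

With 8 GPUs per node and degree 8, each group is exactly one node, so the all-to-all stays on intra-node links, and each GPU holds 65,536 tokens of its sample per micro-step.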

Data collator: the most common source of bugs. Ulysses expects the sequence length to be divisible by the Ulysses degree, so pad every batch to a multiple of the degree and mask the padding out of the loss.

Keep this logic in data/long_context_collator.py and compare it with the examples in the DeepSpeed tutorial.
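A minimal sketch of such a collator for one sample on one sequence-parallel rank, in plain Python so the indexing is easy to follow (a real collator would work on batched tensors). The function names are hypothetical. Two details matter. First, labels are shifted on the full sequence before sharding, so the last token of each rank's chunk keeps its true next-token target. Second, each rank receives global position ids so rotary embeddings see real positions. This assumes the loss does not shift labels again; Hugging Face causal-LM models shift `labels` internally by default, so you would compute the loss yourself or use a pre-shifted-labels option if your version provides one. Right padding needs no extra attention mask under causal attention, because no real token attends to later positions.

```python
import math


def pad_to_multiple(ids, multiple, pad_id):
    """Right-pad a token list so its length is a multiple of `multiple`."""
    target = math.ceil(len(ids) / multiple) * multiple
    return ids + [pad_id] * (target - len(ids))


def collate_for_ulysses(input_ids, sp_degree, sp_rank, pad_id, ignore_index=-100):
    """Hypothetical: build one sample's inputs for one sequence-parallel rank."""
    # 1. Shift on the FULL sequence so chunk boundaries keep their next-token targets.
    labels = input_ids[1:] + [ignore_index]
    # 2. Pad to a multiple of the degree; padded positions are ignored by the loss.
    ids = pad_to_multiple(input_ids, sp_degree, pad_id)
    labels += [ignore_index] * (len(ids) - len(input_ids))
    # 3. Keep this rank's contiguous chunk, with global positions for rotary embeddings.
    chunk = len(ids) // sp_degree
    start, end = sp_rank * chunk, (sp_rank + 1) * chunk
    return {
        "input_ids": ids[start:end],
        "labels": labels[start:end],
        "position_ids": list(range(start, end)),
    }


sample = [11, 12, 13, 14, 15, 16, 17]  # 7 tokens; with degree 4 it is padded to 8
for rank in range(4):
    print(rank, collate_for_ulysses(sample, sp_degree=4, sp_rank=rank, pad_id=0))
# 0 {'input_ids': [11, 12], 'labels': [12, 13], 'position_ids': [0, 1]}
# ...
# 3 {'input_ids': [17, 0], 'labels': [-100, -100], 'position_ids': [6, 7]}
```

Note that rank 0's last label is 13, the first token of rank 1's chunk: shifting after sharding would have lost that target.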

4. Validate locally before burning cloud money

Validation checklist:

- Run a smoke test with sequence length 32k on 2 GPUs first

- Confirm that `torch.cuda.max_memory_allocated()` stays under 75% of each GPU's memory

- Check that attention masks are correctly broadcast across sequence-parallel ranks

- Verify that the loss curve is sane (no NaNs in the first 50 steps)

- Measure tokens/sec and compare against DeepSpeed’s published numbers
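The numeric checks in this list are easy to script. The helpers below are a hypothetical sketch: the pure functions run anywhere, while `gpu_memory_fraction` uses PyTorch's `torch.cuda.max_memory_allocated` and `torch.cuda.get_device_properties` and needs a CUDA device. The example numbers are illustrative, not measurements.

```python
import math


def memory_ok(peak_bytes, total_bytes, limit=0.75):
    """True if peak allocated memory stays at or under `limit` of the device's capacity."""
    return peak_bytes <= limit * total_bytes


def losses_ok(losses):
    """True if every logged loss value is finite (no NaN or inf)."""
    return all(math.isfinite(x) for x in losses)


def tokens_per_sec_per_gpu(tokens_per_step, step_seconds, world_size):
    """Global tokens per optimizer step, divided by step time and by the number of GPUs."""
    return tokens_per_step / step_seconds / world_size


def gpu_memory_fraction():
    """Peak allocated / total memory on the current CUDA device (needs PyTorch and a GPU)."""
    import torch  # imported lazily so the helpers above also run without PyTorch

    device = torch.cuda.current_device()
    peak = torch.cuda.max_memory_allocated(device)
    total = torch.cuda.get_device_properties(device).total_memory
    return peak / total


# Illustrative values for a 32k, 2-GPU smoke test (not measurements).
print(memory_ok(peak_bytes=50 * 2**30, total_bytes=80 * 2**30))  # True
print(losses_ok([2.31, 2.28, float("nan")]))  # False
print(tokens_per_sec_per_gpu(tokens_per_step=32_768, step_seconds=4.0, world_size=2))  # 4096.0
```

On a real run, call `gpu_memory_fraction()` on every rank after a few steps, since the sequence-parallel ranks can differ slightly in peak usage.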

Prompt for debugging:

```text
I am getting OOM at sequence length 262144 with Ulysses degree 4 on 8×H100.
Here is my ds_config and the error traceback.
Suggest the minimal set of changes to make it fit while keeping full attention.
```

5. Ship it safely

Production checklist before full 1M run:

- Use `deepspeed --include` to restrict the initial 100-step runs to a small subset of nodes

- Set `save_steps=200` with `save_total_limit=2`

- Add a preemption handler that saves a full checkpoint, including the ZeRO-3 optimizer state. DeepSpeed's `save_checkpoint` stores the partitioned optimizer state, but it does not detect preemption for you.

- Monitor with `deepspeed.monitor` or Weights & Biases system metrics

- Start with a 128k run, then 256k, then 512k, then 1M, loading the previous checkpoint each time so the context length grows gradually (a common continual pre-training pattern)
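A minimal sketch of the preemption piece, assuming the scheduler sends SIGTERM before killing the job (common, but check your cluster's grace period). The class is hypothetical. Its handler only records the request; the training loop should check the flag at a step boundary and save there, because a ZeRO-3 checkpoint is a collective operation that every rank must join.

```python
import signal


class PreemptionFlag:
    """Hypothetical helper: remember that a termination signal arrived.

    The handler only sets a flag; checkpointing happens later in the training
    loop, at a step boundary, because ZeRO-3 saving is a collective operation.
    """

    def __init__(self, signals=(signal.SIGTERM,)):
        self.requested = False
        for sig in signals:
            signal.signal(sig, self._on_signal)  # must run in the main thread

    def _on_signal(self, signum, frame):
        self.requested = True


flag = PreemptionFlag()
signal.raise_signal(signal.SIGTERM)  # simulate the scheduler's notice (Python 3.8+)
print(flag.requested)
```

With the Hugging Face Trainer, one option is a `TrainerCallback` whose `on_step_end` sets `control.should_save = True` and `control.should_training_stop = True` once the flag is set. On multi-node jobs, make sure all ranks agree before saving (for example by all-reducing the flag), since a rank that missed the signal would otherwise skip the collective save.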

Pitfalls and guardrails

### What if I get "sequence length not divisible by ulysses degree"?

Make sure `max_seq_length % ulysses_degree == 0`, and pad each batch (not only the configured maximum) to a multiple of the degree. For example, 524288 works for degree 8 and 1048576 for degree 16.

### What if communication overhead kills my throughput?

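Ulysses is designed to keep communication low: it adds all-to-all collectives before and after each attention block, and the per-GPU volume stays constant when sequence length and GPU count grow together. If you see low MFU, check that FlashAttention-2 is enabled and that each sequence-parallel group sits inside a single node where possible, so the all-to-all runs over NVLink rather than the inter-node network. As a rough guideline, avoid combining tensor parallelism with a high Ulysses degree unless you have 64+ GPUs.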
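The constant-communication property follows from simple arithmetic. The sketch below follows the per-layer analysis in the DeepSpeed-Ulysses paper for standard multi-head attention: the Q, K, and V tensors (3Nh elements in total) go through an all-to-all before attention and the output (Nh elements) through one after it, and each GPU sends roughly its 1/P share, for about 4Nh/P elements per GPU per layer. The function is hypothetical; it counts the forward pass only and assumes bf16 (2 bytes per element). For grouped-query models such as Llama-3-8B, K and V are narrower than Q, so actual traffic is lower than this estimate.

```python
def ulysses_comm_bytes_per_gpu(seq_len, hidden, sp_degree, bytes_per_elem=2):
    """Approximate per-GPU all-to-all bytes for one attention layer (forward pass).

    Q, K, V (3 * N * h elements) plus the attention output (N * h elements) are
    redistributed, and each GPU handles about 1/P of that total: 4 * N * h / P.
    """
    return 4 * seq_len * hidden * bytes_per_elem / sp_degree


base = ulysses_comm_bytes_per_gpu(524_288, 4096, 8)
scaled = ulysses_comm_bytes_per_gpu(1_048_576, 4096, 16)  # 2x tokens, 2x GPUs
print(base / 2**30, scaled / 2**30)  # 2.0 2.0
```

Doubling both the sequence length and the degree leaves the per-GPU volume unchanged (2 GiB per layer in this example), which is what lets sequence length scale with GPU count.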

### What if my model diverges when jumping from 8k to 512k context?

Use gradual context extension: train at 32k → 64k → 128k → 256k → 512k, annealing the learning rate within each stage. Staged extension like this is a common way to keep training stable at the new length.
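The staged schedule can be generated rather than written by hand. The sketch below doubles the context length from a starting point up to the target, checks every stage against the Ulysses degree, and provides a cosine learning-rate curve that restarts at each stage. Restarting the cosine per stage is one interpretation of per-stage annealing, not a prescribed recipe; the function names and the minimum learning rate are hypothetical (the peak of 2e-5 matches the launch script).

```python
import math


def context_stages(start, target, sp_degree):
    """Double the context length from start up to target; each stage must suit the degree."""
    stages, length = [], start
    while length < target:
        stages.append(length)
        length *= 2
    stages.append(target)
    for stage in stages:
        if stage % sp_degree:
            raise ValueError(f"stage length {stage} is not divisible by degree {sp_degree}")
    return stages


def stage_lr(step, stage_steps, peak_lr, min_lr):
    """Cosine decay from peak_lr to min_lr over one stage (restarted for every stage)."""
    progress = min(step / max(stage_steps, 1), 1.0)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


print(context_stages(32_768, 524_288, sp_degree=8))
# [32768, 65536, 131072, 262144, 524288]
print(stage_lr(0, 1_000, peak_lr=2e-5, min_lr=2e-6), stage_lr(1_000, 1_000, peak_lr=2e-5, min_lr=2e-6))
```

Each stage would start from the previous stage's checkpoint; whether to re-warm the learning rate at each restart is a judgment call worth testing on a short run.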

### What if I only have 8 GPUs?

You can still use Ulysses degree 4 or 8 with a micro-batch size of 1 and aggressive ZeRO-3 CPU offloading. Expect lower throughput and a lower maximum sequence length than on a larger cluster.

What to do next

- Run your first successful 128k training job (celebrate)

- Measure exact tokens/sec/GPU and compare to the numbers in the DeepSpeed blog

- Increment context length by 2× and repeat

- Once stable at target length, switch to your real domain dataset

- Export the final checkpoint and evaluate on your long-context downstream tasks (needle-in-haystack, long-document QA, etc.)
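For the needle-in-a-haystack check, the core idea is to hide one distinctive fact at a chosen depth inside long filler text and ask the model to retrieve it. The sketch below is hypothetical and deliberately minimal: it measures length in sentences rather than tokens and scores answers by substring match, whereas real evaluations size the haystack in tokens with the model's tokenizer and sweep many depths and lengths.

```python
def build_needle_prompt(filler, needle, n_filler, depth):
    """Hide `needle` among n_filler copies of `filler` at relative depth 0.0 (start) to 1.0 (end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    sentences = [filler] * n_filler
    sentences.insert(round(depth * n_filler), needle)
    return " ".join(sentences)


def needle_found(answer, expected):
    """Score a model answer by case-insensitive substring match."""
    return expected.lower() in answer.lower()


haystack = build_needle_prompt(
    filler="The harbor was quiet that morning.",
    needle="The secret passphrase is blue-falcon-42.",
    n_filler=10,
    depth=0.5,
)
prompt = haystack + "\nWhat is the secret passphrase?"  # send this to the model
print(needle_found("It is Blue-Falcon-42.", "blue-falcon-42"))  # True
```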

Sources

- Hugging Face Blog: Ulysses Sequence Parallelism – Training with Million-Token Contexts (https://huggingface.co/blog/ulysses-sp)

- DeepSpeed-Ulysses Official Tutorial (https://www.deepspeed.ai/tutorials/ds-sequence/)

- DeepSpeed GitHub – blogs/deepspeed-ulysses (https://github.com/microsoft/DeepSpeed/blob/master/blogs/deepspeed-ulysses/README.md)

- arXiv paper: DeepSpeed Ulysses: System Optimizations for Enabling Training of Extreme Long Sequence Transformer Models (https://arxiv.org/pdf/2309.14509)


This guide gives you a repeatable, AI-assisted workflow that turns the Ulysses announcement into production-grade long-context training capability in a single sprint.