The short version

Ulysses Sequence Parallelism, from Microsoft's DeepSpeed project and Hugging Face, lets AI models train on extremely long sequences of text, up to 1 million tokens. That is enough to fit an entire book or a large collection of documents in one go. Instead of being capped by the memory of a single GPU, each sequence is split across many GPUs. This supports sequences 4x longer than earlier systems and keeps scaling as more machines are added. For you, it points toward future AI chatbots, summarizers, and analyzers that can take in far more context without losing track of details, and toward smarter, more reliable tools in the apps you use daily.

What happened

Imagine you're trying to read a huge novel, but your brain can only hold a few pages at once, so you keep forgetting what happened earlier. That's roughly the position most AI models have been in. They are trained on fixed-length "sequences" of text, often just a few thousand tokens long. A whole book (hundreds of thousands of words) won't fit into a single training example, because it needs more memory than one GPU has.

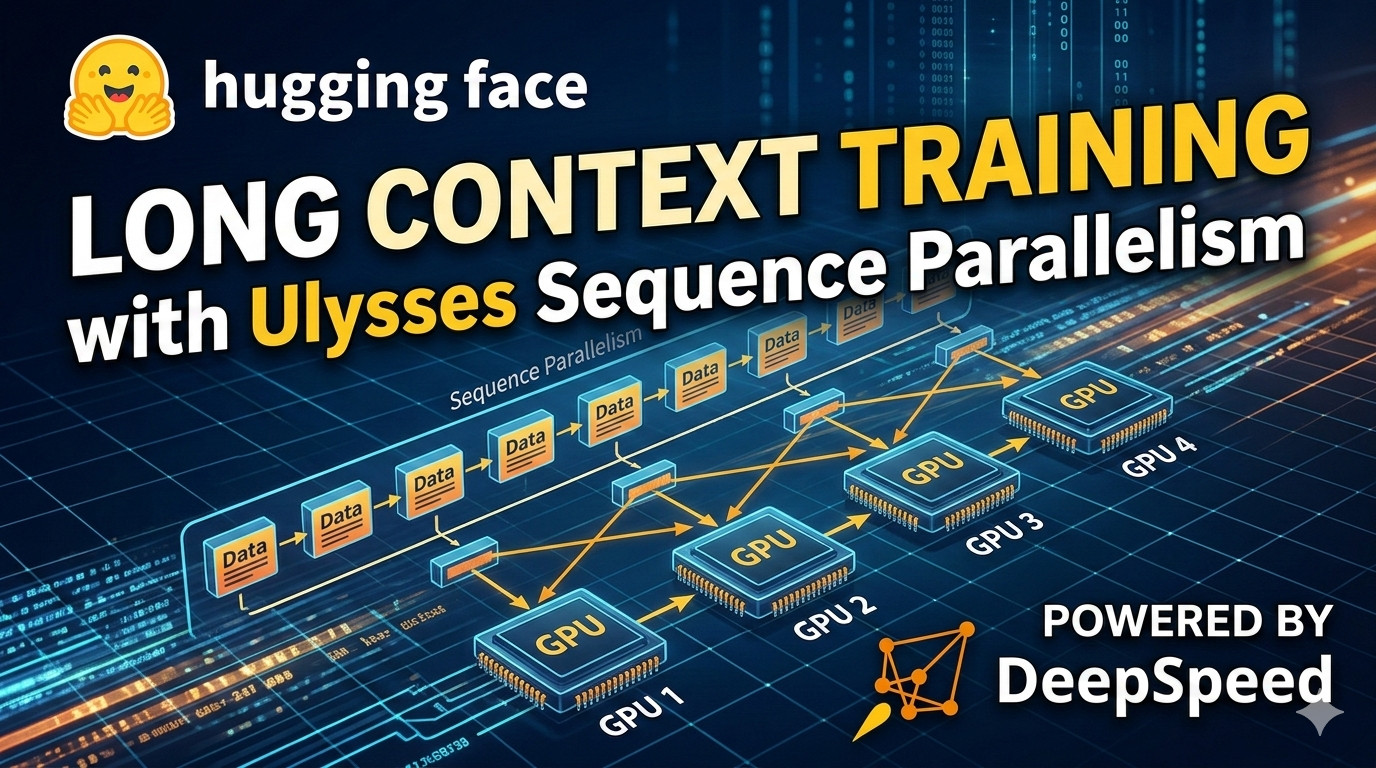

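Enter Ulysses Sequence Parallelism, a clever teamwork trick from Microsoft's DeepSpeed project and Hugging Face. It's like dividing that giant novel among a team of friends. Each one reads a section, and at the key moment they trade notes: afterward, every friend sees the whole story but follows only a few of its threads. In technical terms, each GPU (the specialized processors that train AI in data centers) holds one slice of the sequence. For the attention step, where every word is compared with every other word, the GPUs exchange data so each one sees the full sequence for a subset of the model's "attention heads." Then they exchange it back. Spreading the work this way gets around the memory limit of any single GPU.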
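In Ulysses, each GPU holds a slice of the sequence. Before the attention step, the GPUs swap data so each one has the whole sequence for a subset of attention heads. That note-swapping can be simulated in a single Python process. The sketch below is a simplified illustration under stated assumptions, not DeepSpeed's implementation. Plain lists stand in for GPU tensors. The functions `seq_to_heads` and `heads_to_seq` are hypothetical stand-ins for real all-to-all communication, and every name here is made up for this example. The sketch checks that splitting the work this way gives the same answer as ordinary attention on one device.

```python
import math
import random


def attention(q, k, v):
    """Standard softmax attention for one head; q, k, v are lists of per-token vectors."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) / total for c in range(d)])
    return out


def shard_by_sequence(x, p):
    """Split x[head][token] so simulated GPU r holds one contiguous token slice for every head."""
    chunk = len(x[0]) // p
    return [[head[r * chunk:(r + 1) * chunk] for head in x] for r in range(p)]


def seq_to_heads(shards, p):
    """Simulated all-to-all: GPU r receives the FULL sequence for its own group of heads."""
    heads_per_gpu = len(shards[0]) // p
    return [
        [[tok for s in range(p) for tok in shards[s][h]]  # concatenate slices in token order
         for h in range(r * heads_per_gpu, (r + 1) * heads_per_gpu)]
        for r in range(p)
    ]


def heads_to_seq(head_shards, p):
    """Inverse all-to-all: GPU r gets its own token slice back, now for every head."""
    heads_per_gpu = len(head_shards[0])
    chunk = len(head_shards[0][0]) // p
    return [
        [head_shards[s][h][r * chunk:(r + 1) * chunk] for s in range(p) for h in range(heads_per_gpu)]
        for r in range(p)
    ]


def ulysses_style_attention(q_shards, k_shards, v_shards, p):
    q, k, v = (seq_to_heads(x, p) for x in (q_shards, k_shards, v_shards))
    # Each simulated GPU runs ordinary attention over the whole sequence, but only for its heads.
    outputs = [[attention(qh, kh, vh) for qh, kh, vh in zip(q[r], k[r], v[r])] for r in range(p)]
    return heads_to_seq(outputs, p)


if __name__ == "__main__":
    random.seed(0)
    H, N, D, P = 4, 8, 3, 2  # heads, tokens, size of each head's vectors, simulated GPUs

    def random_tensor():
        return [[[random.uniform(-1, 1) for _ in range(D)] for _ in range(N)] for _ in range(H)]

    q, k, v = random_tensor(), random_tensor(), random_tensor()
    reference = shard_by_sequence([attention(q[h], k[h], v[h]) for h in range(H)], P)
    split = ulysses_style_attention(*(shard_by_sequence(x, P) for x in (q, k, v)), P)
    print(split == reference)  # True: same result as single-device attention
```

The design choice this illustrates is that no GPU ever needs all heads for all tokens at once. Each one holds either a slice of tokens or a slice of heads. The sketch assumes the head count and sequence length divide evenly by the number of simulated GPUs.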

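From the details shared, this tech handles sequences of up to 1 million tokens. The name nods to James Joyce's famously long novel Ulysses, though 1 million tokens is about 750,000 English words, nearly three times the length of that book. It supports sequences 4x longer than previous systems and works with standard transformer models (the architecture behind ChatGPT and similar AIs). The team tested it on 1,024 GPUs, starting at 8,000 tokens and scaling up from there while keeping training fast and efficient. According to the team, training can now scale to longer sequences "near indefinitely" as GPUs are added. One practical limit remains: the number of GPUs sharing a single sequence can't exceed the model's number of attention heads, since each GPU takes its own share of them.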
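The figures above can be checked with quick arithmetic. This Python sketch assumes the common rule of thumb of about 0.75 English words per token; real ratios vary by tokenizer and language. It also assumes a word count of roughly 265,000 for Joyce's Ulysses. The `tokens_per_gpu` helper is hypothetical. It also assumes, as in Ulysses-style splitting, that the number of GPUs must divide the number of attention heads evenly.

```python
import math

WORDS_PER_TOKEN = 0.75         # rule of thumb for English text; varies by tokenizer
ULYSSES_NOVEL_WORDS = 265_000  # approximate word count of Joyce's Ulysses


def tokens_to_words(tokens):
    """Rough English word count for a given number of tokens."""
    return tokens * WORDS_PER_TOKEN


def tokens_per_gpu(seq_len, num_gpus, num_heads):
    """Tokens each GPU holds when one sequence is split across num_gpus.
    Assumption: each GPU later takes an equal share of the attention heads,
    so this sketch rejects GPU counts that don't divide the head count."""
    if num_heads % num_gpus != 0:
        raise ValueError("number of GPUs must divide the number of attention heads")
    return math.ceil(seq_len / num_gpus)


if __name__ == "__main__":
    words = tokens_to_words(1_000_000)
    print(f"{words:,.0f} words, about {words / ULYSSES_NOVEL_WORDS:.1f}x the length of Ulysses")
    print(tokens_per_gpu(1_000_000, num_gpus=8, num_heads=32), "tokens per GPU")
```

The 8-GPU, 32-head configuration is purely illustrative and not taken from the Ulysses experiments.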

This isn't just theory: the code is released, with DeepSpeed tutorials, so researchers and companies can try it and build on it.

Why should you care?

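Right now, when you ask an AI like ChatGPT to summarize a long email thread, analyze a book, or plan a trip based on your entire travel history, it can lose details from the start. That happens because its context window (the amount of text it can consider at once) is limited, often somewhere between 4,000 and 128,000 tokens for widely used models. Once a conversation outgrows that window, the oldest parts typically get dropped. Ulysses tackles the problem at the training stage, because models trained on much longer sequences can learn to make use of much longer contexts.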
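Here is a simplified picture of that forgetting. Suppose an app keeps only the newest messages that fit in the window. Then the earliest messages fall out first. The Python sketch below assumes exactly that policy, plus a crude word-based token estimate; `rough_token_count` and `fit_to_context` are hypothetical helpers. Real apps may handle overflow differently, for example by summarizing older messages.

```python
import math


def rough_token_count(text):
    """Hypothetical estimator: about 4 tokens per 3 English words (0.75 words-per-token rule of thumb)."""
    return math.ceil(len(text.split()) * 4 / 3)


def fit_to_context(messages, context_limit, count_tokens=rough_token_count):
    """Keep the newest messages whose combined size fits in the context window.
    Walking backwards from the latest message means the OLDEST ones are dropped first."""
    kept, used = [], 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > context_limit:
            break  # everything older than this message is left out
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


if __name__ == "__main__":
    history = [
        "My name is Ada and I live in Lisbon.",
        "What should I pack?",
        "Suggest a weekend plan.",
    ]
    # With a 12-token window, the message with Ada's name and city is dropped.
    print(fit_to_context(history, context_limit=12))
```

A larger window keeps more of the history. Training on longer sequences is what lets a model make real use of that larger window.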

For everyday users, this could make AI noticeably better at real-life tasks:

- Smarter conversations: Less repeating yourself in chats, because the AI can keep your full backstory in view.

- Better analysis: Upload a whole novel, legal document, or a year's worth of emails, and get insights that draw on the entire text.

- Science and creativity boost: Researchers can train AIs on massive datasets, like entire genomes or climate records, which could lead to breakthroughs that trickle down to apps (think personalized medicine or more accurate weather apps).

- Cheaper, faster AI overall: Using hardware more efficiently can lower training costs. That could make premium AI features cheaper or even free in tools like Google Docs, Microsoft Office, or phone assistants.

It's a big deal because longer contexts make AI feel more "human": less robotic forgetting, and more like talking to a friend who remembers the whole conversation.

What changes for you

Practically, nothing flips overnight, because this is a behind-the-scenes upgrade for AI builders. But over the next 6 to 12 months, you might start to see:

- Apps like ChatGPT or Gemini handling much longer inputs more reliably. Paste a 100-page report, and it still remembers page 1 when it reaches page 100.

- Phone and laptop assistants that summarize long podcasts, books, or video transcripts more accurately.

- Work tools: Excel or Google Sheets with AI that scans entire spreadsheets; email apps that draft replies based on months of threads.

- Creative stuff: Writing apps that keep plot consistency over novel-length stories, or video editors suggesting cuts from hour-long footage.

- Cost savings: More efficient training means companies spend less. Some of those savings may reach you as fewer price hikes for "pro" long-context features.

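If you're a hobbyist with access to several GPUs (for example, rented from a cloud provider), you can follow DeepSpeed's free tutorial and experiment. One idea is training your own model on long texts like journals or recipe collections for fun, personalized results.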

Frequently Asked Questions

### What exactly is a "token" and why does length matter?

A token is a chunk of text, such as a word or part of a word; think of tokens as the Lego bricks that sentences are built from. A short sequence (say, 4,000 tokens, or about 3,000 words) is like a short story, while 1 million tokens is like several long novels at once. Longer sequences let AI pick up connections across a whole document, so it can give more accurate answers without you re-explaining everything.
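To see how a word can become several tokens, here is a toy splitter. It uses greedy longest-match, the approach behind WordPiece-style tokenizers, applied to a tiny hypothetical vocabulary. Real models learn vocabularies with tens of thousands of pieces, often via byte-pair encoding. So treat this Python sketch as an illustration of the idea, not how any particular model tokenizes text.

```python
def greedy_subword_tokenize(word, vocab):
    """Split a word into the longest pieces found in vocab, scanning left to right.
    Falls back to single characters when no longer piece is known."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        # shrink the candidate until it is in the vocabulary or is a single character
        while end > start + 1 and word[start:end] not in vocab:
            end -= 1
        pieces.append(word[start:end])
        start = end
    return pieces


TOY_VOCAB = {"un", "break", "able", "token", "ize", "s"}  # hypothetical, tiny vocabulary

if __name__ == "__main__":
    for w in ["unbreakable", "tokenizes", "cat"]:
        print(w, "->", greedy_subword_tokenize(w, TOY_VOCAB))
```

Here "unbreakable" becomes three tokens, and "cat" falls back to one token per letter because the toy vocabulary has no matching piece. That shows why token counts and word counts differ.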

### Is this available now, or just for big companies?

It's open-source and available now through DeepSpeed tutorials. You can start training models with it today if you have access to multiple GPUs (for example, through cloud services). Big players like Microsoft may build it into their products, but everyday users are more likely to benefit indirectly, through updated apps like Bing or Azure AI tools.

### How is Ulysses different from regular AI training?

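In standard training, each GPU has to hold a whole training sequence, so sequence length hits a wall set by one GPU's memory, like trying to fit a king-size mattress in a twin bed. Ulysses splits each sequence across many GPUs. They work on their slices at the same time and swap data only for the attention step. That lets it handle sequences 4x longer than earlier approaches while keeping training efficient, and it works with standard transformer models rather than requiring custom ones.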
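Why does the data swap stay affordable? Here is a back-of-envelope count, derived from the all-to-all pattern rather than taken from DeepSpeed's documentation. Each GPU holds `seq_len / p` tokens of width `hidden` for each of Q, K, and V. It keeps 1/p of each tensor and sends the rest, then does one more exchange for the attention output. The Python sketch below ignores batch size, numeric precision, and real network behavior. The function name and the example sizes are hypothetical.

```python
def ulysses_elements_sent_per_gpu(seq_len, hidden, p):
    """Back-of-envelope count of activation values one GPU sends per attention layer.

    Assumptions (simplified): batch size 1, seq_len divisible by p, and four
    all-to-all exchanges (Q, K, V before attention, the output after it). Each GPU
    holds seq_len / p tokens of width `hidden` per tensor, keeps 1/p of it, sends the rest.
    """
    local = (seq_len // p) * hidden
    return 4 * local * (p - 1) / p


if __name__ == "__main__":
    tokens_per_gpu, hidden = 16_384, 4_096  # illustrative sizes, not from the Ulysses paper
    for p in (8, 16, 32, 64):
        seq_len = tokens_per_gpu * p  # grow the sequence together with the GPU count
        sent = ulysses_elements_sent_per_gpu(seq_len, hidden, p)
        print(f"{p:>2} GPUs, {seq_len:>9,} tokens: {sent / 1e6:6.1f}M values sent per GPU")
```

In this loop the sequence grows eightfold, from 131,072 to 1,048,576 tokens. Meanwhile, the per-GPU traffic only creeps up toward a ceiling of 4 × 16,384 × 4,096 (about 268M values) instead of growing with the total sequence length.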

### Will this make my current AI apps better right away?

Not immediately. This upgrades how new AIs are trained, so any gains would show up in future model versions, not in today's apps like Copilot or Claude. It is also useful for "continual pretraining," where an existing model keeps training (for example, on longer documents) instead of starting over from scratch.

### Does this only help super-long stuff, or everyday use too?

It mainly helps with long contexts. Splitting a sequence across GPUs matters most when that sequence is too long for one GPU. Your quick queries may still benefit indirectly if more efficient training leads to smarter models that cost less to build.

The bottom line

Ulysses Sequence Parallelism is like giving AI an elephant's memory during training, letting it work through million-token contexts that no single GPU could hold. From Microsoft's DeepSpeed project and Hugging Face, it's open technology that supports training sequences 4x longer than before. It also keeps scaling as more machines are added, paving the way for AIs that truly "get" long, complex information. For you, the likely win is better tools down the line: chatbots that remember full conversations, analyzers for big files, and apps that feel more intuitive. Keep an eye on updates from these teams, because your next AI upgrade may be a lot more capable.